AI Voices Explained

How neural networks learn to speak — and why today's AI voices sound so natural.

What makes a voice sound "natural"?

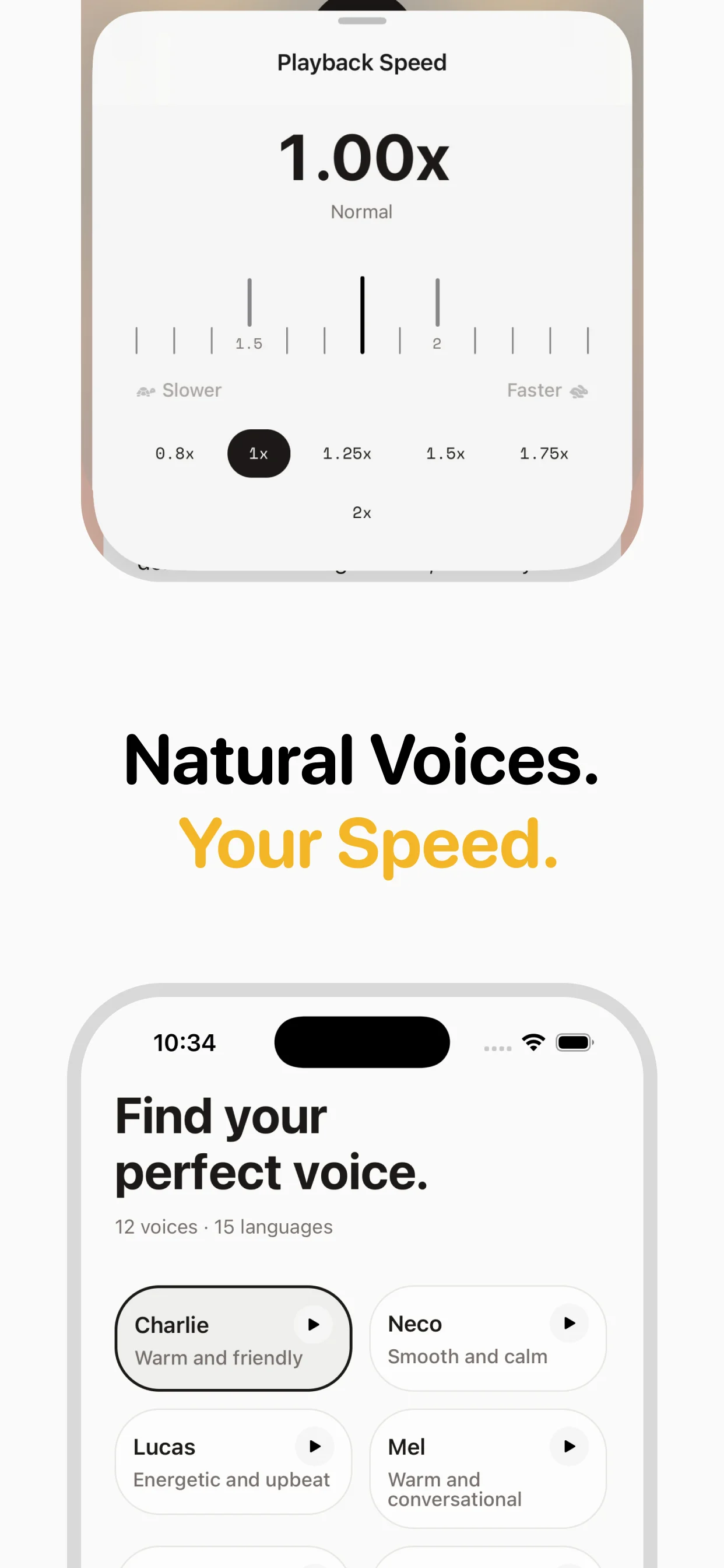

Natural speech is incredibly complex. Beyond pronouncing words correctly, humans vary their pitch by 50-100 Hz within a single sentence. We pause before important points, speed up through familiar phrases, and subtly adjust our tone for questions, statements, and emphasis. We breathe. We have speaking quirks. Early TTS systems missed all of this: they got the words right but the delivery wrong. Modern AI voices capture these nuances because they learn from real speech rather than following programmed rules.

Training an AI voice

Creating a neural voice starts with a dataset: typically 10-40 hours of high-quality recorded speech from a single speaker, transcribed and aligned at the phoneme level. The model learns the mapping between text (as phoneme sequences) and audio features. During training, it picks up the speaker's unique vocal characteristics: timbre, accent, speaking rhythm, and the way the speaker handles different types of content. Modern architectures like VITS, XTTS, and proprietary models from InWorld and OpenAI can learn a convincing voice from as little as 10 minutes of audio, though more data produces better results.
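To make "transcribed and aligned at the phoneme level" concrete, here is a minimal sketch of what one training example might look like. The class names, the ARPAbet-style symbols, and the timings are illustrative assumptions, not the format of any particular toolkit.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PhonemeSegment:
    symbol: str   # phoneme label (ARPAbet-style, chosen for illustration)
    start: float  # start time in seconds
    end: float    # end time in seconds


@dataclass
class Utterance:
    text: str
    segments: List[PhonemeSegment]

    def duration(self) -> float:
        """Seconds of audio covered by the aligned phonemes."""
        if not self.segments:
            return 0.0
        return self.segments[-1].end - self.segments[0].start

    def is_well_aligned(self) -> bool:
        """True if every segment has positive length and none overlap."""
        previous_end = None
        for seg in self.segments:
            if seg.end <= seg.start:
                return False
            if previous_end is not None and seg.start < previous_end:
                return False
            previous_end = seg.end
        return bool(self.segments)


def dataset_hours(utterances: List[Utterance]) -> float:
    """Total aligned audio in hours, useful for checking dataset size."""
    return sum(u.duration() for u in utterances) / 3600.0


# Hypothetical example: the word "hello" with made-up timings.
hello = Utterance(
    text="hello",
    segments=[
        PhonemeSegment("HH", 0.00, 0.08),
        PhonemeSegment("AH", 0.08, 0.15),
        PhonemeSegment("L", 0.15, 0.24),
        PhonemeSegment("OW", 0.24, 0.42),
    ],
)
```

A real pipeline would produce these alignments with a forced aligner and store audio features alongside them; the sketch only shows the text-to-time structure the model trains on.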

Zero-shot and few-shot voice cloning

Recent advances allow creating new voices from very short samples — sometimes just a few seconds. Zero-shot voice cloning models (like OpenAI's) encode a speaker's voice characteristics from a reference sample, then apply those characteristics when synthesizing any text. This means thousands of distinct voices can be created without thousands of hours of training data. The quality trade-off is real, though: voices trained on extensive data still sound more consistent and natural than few-shot clones.
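The idea of encoding a voice from a reference sample can be sketched in a few lines. This is a toy stand-in, not how any production model works: real systems use a trained speaker encoder, whereas the hypothetical `speaker_embedding` function below simply averages per-frame feature vectors into one fixed-length vector. What it does show is that a reference clip of any length collapses to the same-sized "voice fingerprint", which can then be compared with cosine similarity.

```python
import math
from typing import List


def speaker_embedding(frames: List[List[float]]) -> List[float]:
    """Mean-pool per-frame features into one unit-length vector.

    Assumption: `frames` holds acoustic feature vectors extracted
    elsewhere; a trained encoder would replace this averaging step.
    """
    if not frames:
        raise ValueError("need at least one frame")
    dim = len(frames[0])
    pooled = [sum(f[i] for f in frames) / len(frames) for i in range(dim)]
    norm = math.sqrt(sum(x * x for x in pooled))
    if norm == 0:
        raise ValueError("cannot normalize an all-zero embedding")
    return [x / norm for x in pooled]


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Dot product of two unit-length embeddings."""
    return sum(x * y for x, y in zip(a, b))


# Made-up feature frames: two clips of different lengths from "speaker A"
# and one clip from "speaker B".
short_clip_a = [[1.0, 0.1], [0.9, 0.1]]
long_clip_a = [[1.0, 0.1], [0.9, 0.1], [1.0, 0.1], [0.9, 0.1]]
clip_b = [[0.1, 1.0], [0.1, 0.9]]

emb_a_short = speaker_embedding(short_clip_a)
emb_a_long = speaker_embedding(long_clip_a)
emb_b = speaker_embedding(clip_b)
```

In a zero-shot system, an embedding like this would be passed to the synthesizer together with the text, so the same model can speak in many voices without retraining.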

Voice quality metrics

The industry measures voice quality using Mean Opinion Score (MOS): human listeners rate samples on a 1-5 scale, and the ratings are averaged. Real human speech scores around 4.5-4.8. The best current neural TTS voices score 4.0-4.5, a dramatic improvement from 2.5-3.0 just five years ago. Other metrics include Word Error Rate (WER), which measures intelligibility by transcribing the synthesized audio with a speech recognizer and counting how many words differ from the input text, and prosody naturalness scores. InWorld's voices, used by speakeasy, consistently score above 4.0 MOS, making them suitable for long-form listening where listener fatigue is a real concern.
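Both headline metrics are simple to compute once the ratings and transcripts exist. The sketch below is a minimal illustration with made-up inputs: `mean_opinion_score` averages listener ratings and adds a normal-approximation 95% confidence interval, and `word_error_rate` computes word-level edit distance divided by the reference length. It assumes the hypothesis string comes from a speech recognizer run on the synthesized audio, which is not shown here.

```python
import math
from statistics import mean, stdev
from typing import List, Tuple


def mean_opinion_score(ratings: List[int]) -> Tuple[float, float]:
    """Return (MOS, 95% confidence half-width) for 1-5 listener ratings."""
    if not ratings or any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be non-empty and within 1-5")
    mos = mean(ratings)
    if len(ratings) < 2:
        return mos, 0.0  # no spread can be estimated from one rating
    half_width = 1.96 * stdev(ratings) / math.sqrt(len(ratings))
    return mos, half_width


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Levenshtein distance over words, keeping only the previous row.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


# Made-up ratings from five listeners for one sample.
mos, ci = mean_opinion_score([4, 5, 4, 4, 5])

# One substituted word out of four.
wer = word_error_rate("the quick brown fox", "the quick brown box")
```

Reporting the confidence interval alongside the score matters in practice, since MOS differences of a few tenths can fall within listener-to-listener noise when the rating pool is small.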

Ethical considerations

AI voice technology raises important ethical questions. Voice cloning can be misused for deepfakes and impersonation. Responsible providers like InWorld and OpenAI use consent-based voice creation and have policies against generating voices that mimic real people without permission. speakeasy uses licensed, purpose-built voices rather than clones of real individuals. The industry is moving toward watermarking AI-generated audio and establishing clear disclosure standards.

Frequently asked questions

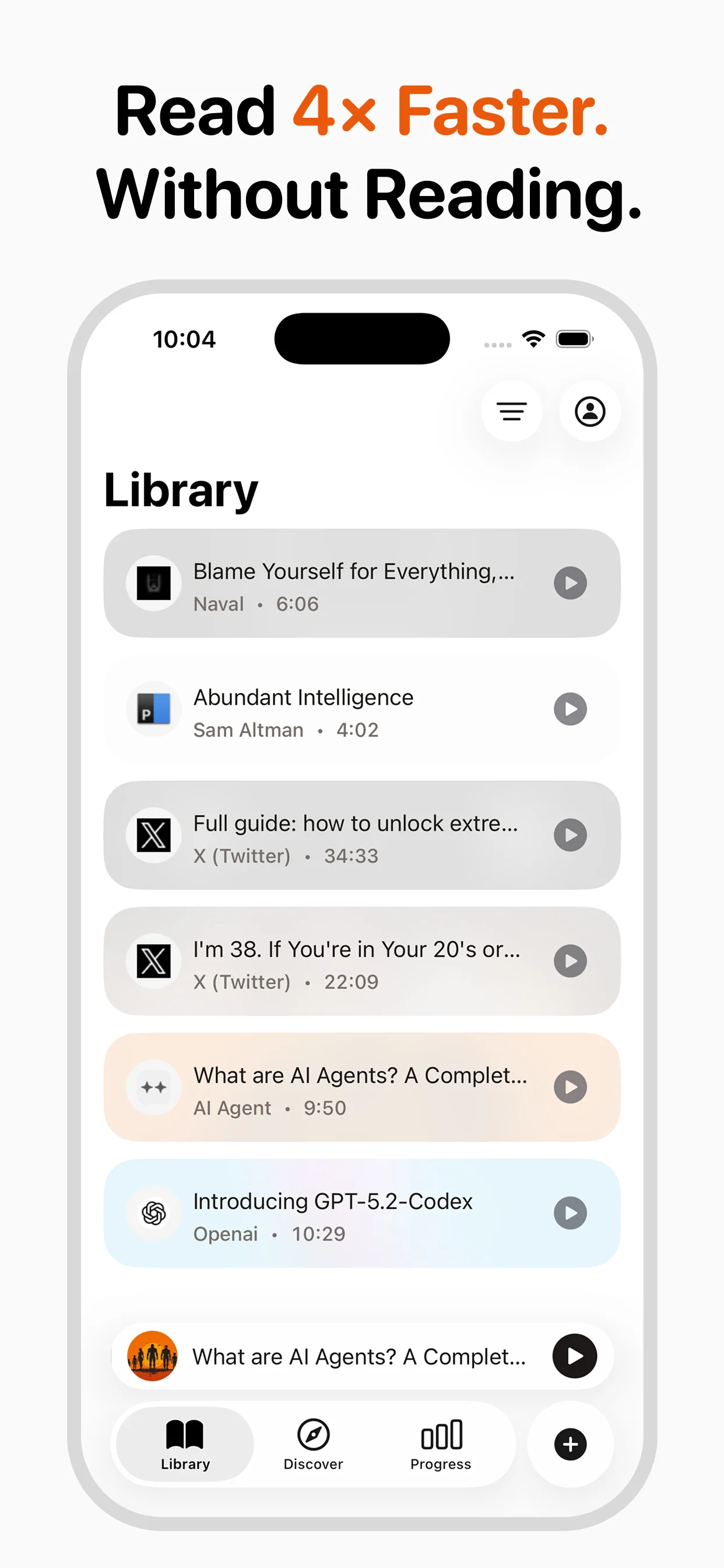

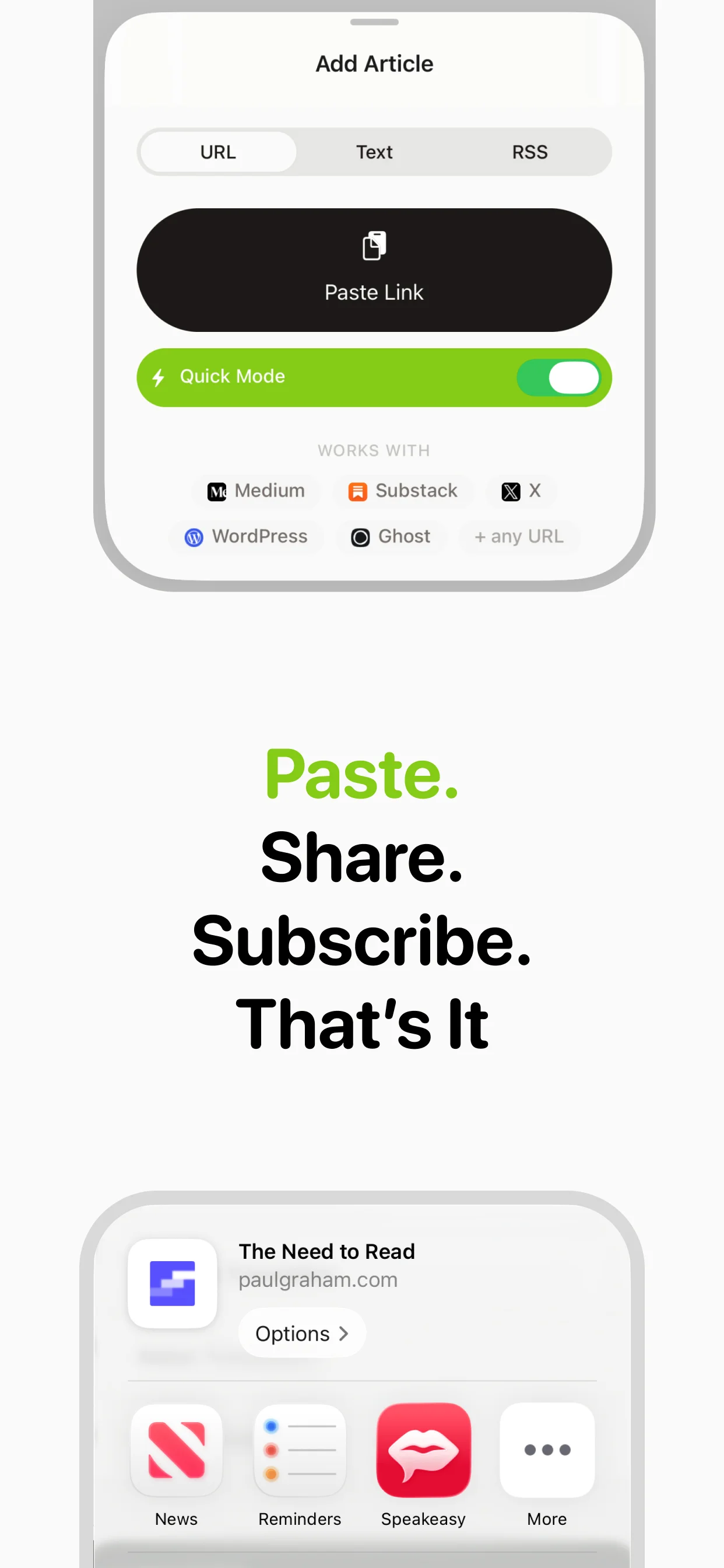

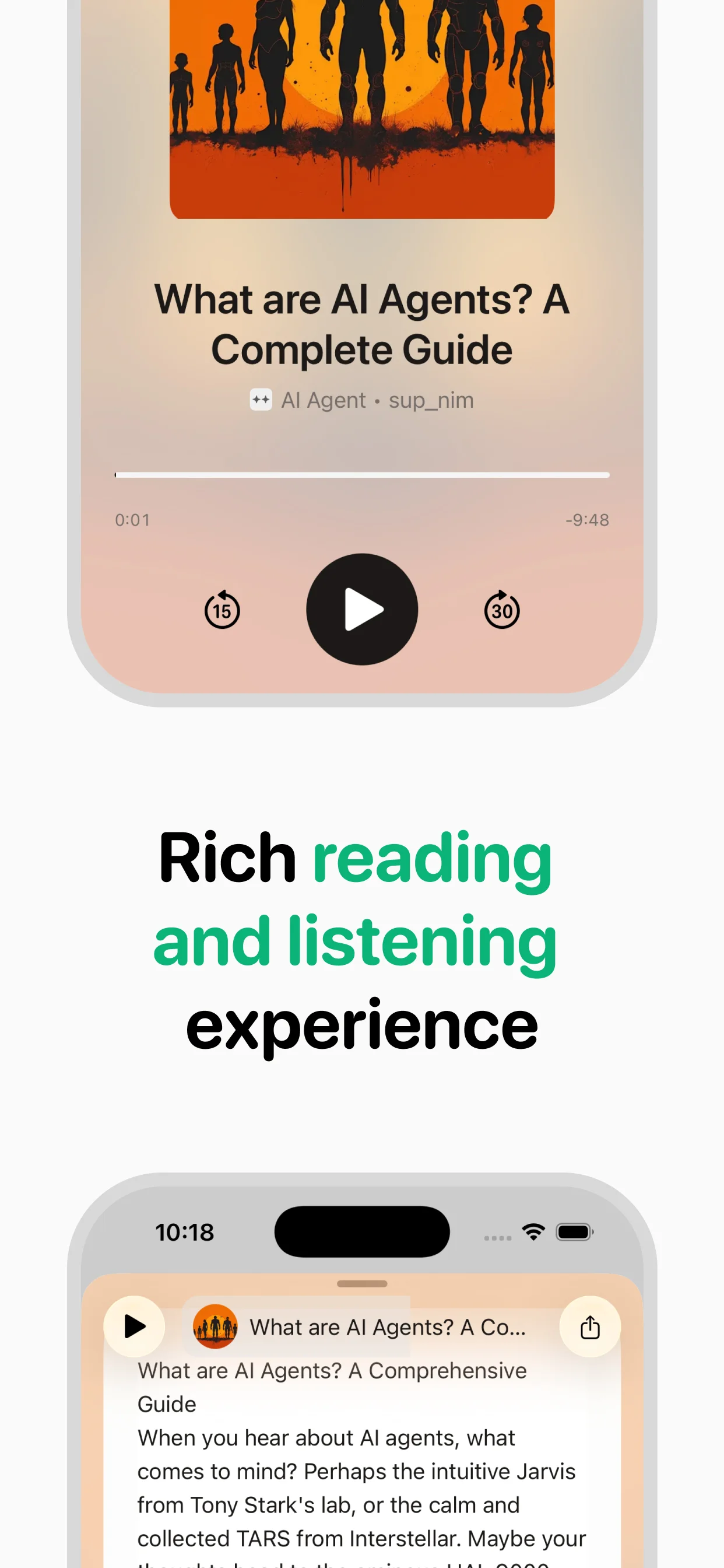

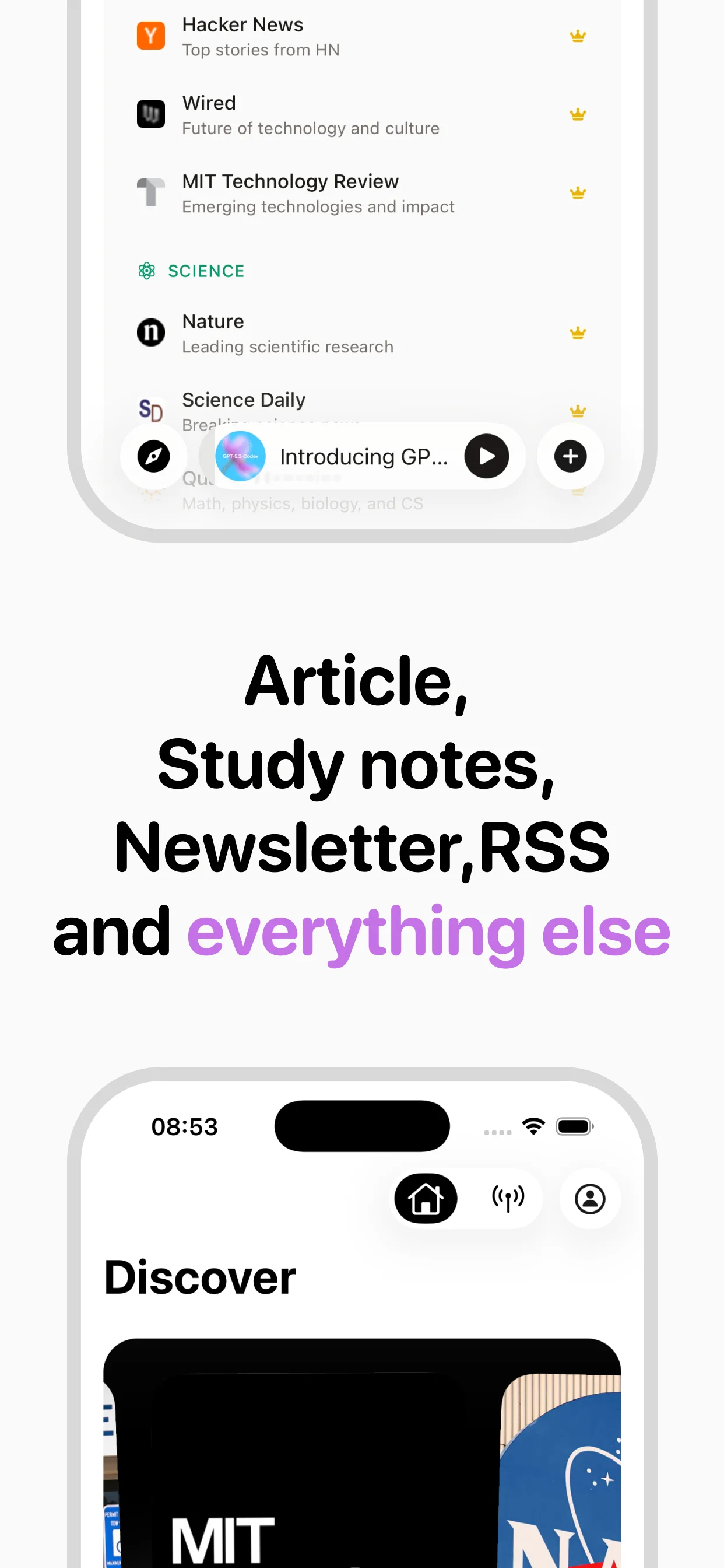

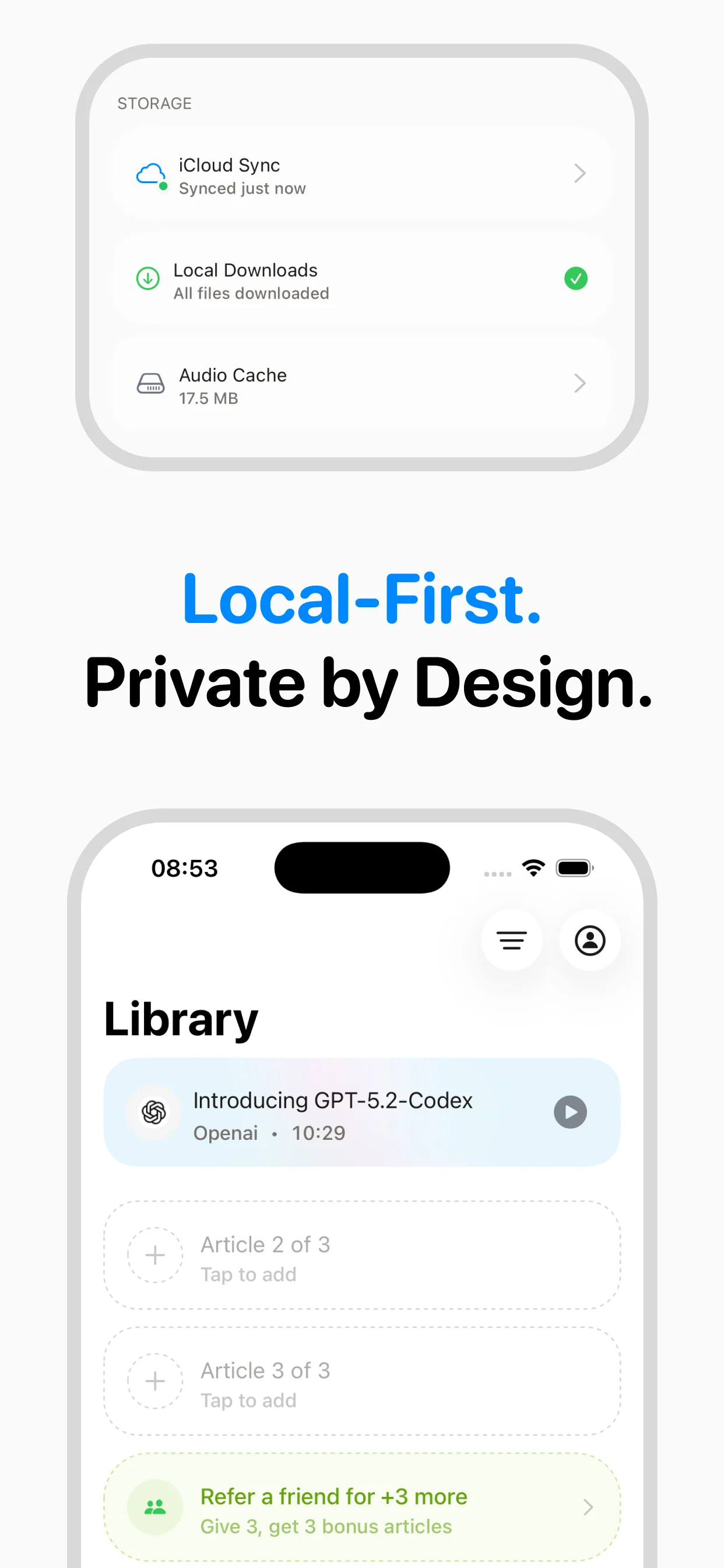
