Neural TTS vs Traditional TTS

Why modern AI voices sound human while older systems sound like robots — the technology explained.

Three generations of TTS

Text-to-speech technology has evolved through three distinct generations: formant synthesis (1960s-1990s), concatenative synthesis (1990s-2010s), and neural synthesis (2016-present). Each generation solved problems the previous one couldn't, and understanding the differences explains why the voice quality gap is so dramatic.

Formant synthesis: the robotic era

Formant synthesizers model the human vocal tract mathematically, generating sound by simulating the resonant frequencies (formants) of speech. Think of the voice from Stephen Hawking's speech computer or early GPS devices. These systems are fast, compact, and can run on minimal hardware, but they sound distinctly robotic — monotone, buzzy, and lacking natural rhythm. They're still used in some accessibility tools where hardware resources are limited.
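The idea of simulating resonant frequencies can be sketched in a few lines. The example below is a minimal illustration, not how any particular product works: it feeds a pulse train (a stand-in for the vocal cords) through a cascade of second-order resonators, one per formant. The function names, the bandwidth values, and the 120 Hz pitch are assumptions chosen for illustration; the formant frequencies are commonly cited approximate values for an adult male "ah" vowel.

```python
import math

def resonator(signal, freq_hz, bandwidth_hz, sample_rate):
    """Second-order IIR resonator that emphasizes energy near freq_hz."""
    r = math.exp(-math.pi * bandwidth_hz / sample_rate)  # pole radius (< 1, stable)
    theta = 2 * math.pi * freq_hz / sample_rate           # pole angle
    a1 = 2 * r * math.cos(theta)
    a2 = -r * r
    gain = 1 - r  # rough level control, illustrative only
    out = []
    y1 = y2 = 0.0
    for x in signal:
        y = gain * x + a1 * y1 + a2 * y2
        out.append(y)
        y2, y1 = y1, y
    return out

def synthesize_vowel(formants, pitch_hz=120, duration_s=0.3, sample_rate=16000):
    """Pulse train at the pitch frequency, shaped by one resonator per formant."""
    n = round(duration_s * sample_rate)
    period = int(sample_rate / pitch_hz)
    signal = [1.0 if i % period == 0 else 0.0 for i in range(n)]
    for freq_hz, bandwidth_hz in formants:
        signal = resonator(signal, freq_hz, bandwidth_hz, sample_rate)
    peak = max(abs(s) for s in signal) or 1.0
    return [s / peak for s in signal]  # normalize to the range [-1, 1]

# Approximate (frequency, bandwidth) pairs in Hz for an "ah" vowel.
# The bandwidths are assumed values for this sketch.
AH_FORMANTS = [(730, 90), (1090, 110), (2440, 170)]
samples = synthesize_vowel(AH_FORMANTS)
```

Because the pulse train is perfectly periodic and the filter settings never change, the result has the steady, buzzy character described above. Real formant synthesizers vary pitch and formants over time using hand-written rules.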

Concatenative synthesis: the splice era

Concatenative systems record a human speaker saying thousands of phoneme combinations, then stitch together the appropriate segments for any given text. This produces more natural timbre (since it uses real human recordings) but introduces audible "seams" between segments — slight discontinuities in pitch and timing. The voice quality varies: common phoneme combinations sound great, while rare ones can sound strange. These systems need large databases (often 10-20 GB of recordings) and can't easily modify voice characteristics.
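The stitching step, and why seams appear, can be illustrated with a small sketch. Everything here is hypothetical: the `unit_db` dictionary stands in for a database of recorded segments, and plain sine tones at slightly different pitches stand in for real recordings. A short crossfade at each join is one simple way to soften a seam; production systems use more elaborate unit selection and smoothing.

```python
import math

def crossfade_concat(units, overlap):
    """Join units end to end, blending `overlap` samples at each seam."""
    if not units:
        return []
    out = list(units[0])
    for unit in units[1:]:
        n = min(overlap, len(out), len(unit))
        for i in range(n):
            w = (i + 1) / (n + 1)            # weight of the incoming unit
            idx = len(out) - n + i
            out[idx] = (1 - w) * out[idx] + w * unit[i]
        out.extend(unit[n:])                 # append the unblended remainder
    return out

def tone(freq_hz, n_samples, sample_rate=16000):
    """Placeholder for a recorded segment: a sine tone."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n_samples)]

# Hypothetical unit database keyed by segment name. The pitch mismatch
# between entries mimics the discontinuities found between real recordings.
unit_db = {
    "h-e": tone(200, 800),
    "e-l": tone(220, 800),
    "l-o": tone(210, 800),
}

def synthesize(unit_names, overlap=80):
    return crossfade_concat([unit_db[name] for name in unit_names], overlap)

audio = synthesize(["h-e", "e-l", "l-o"])
```

The crossfade hides the click at each join, but it cannot remove the underlying pitch jump between neighboring units, which is the kind of discontinuity listeners hear as a seam.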

Neural synthesis: the AI era

Neural TTS generates speech from scratch using deep learning models. Rather than following hand-written rules or splicing recordings, a neural network learns the mapping from text to audio from recorded speech. The breakthrough came in 2016 with DeepMind's WaveNet, which generated audio sample by sample using a deep neural network. Since then, architectures have evolved rapidly: Tacotron, FastSpeech, VITS, and proprietary models from companies like Inworld and OpenAI. Neural voices capture natural prosody, breathing, emphasis, and emotional tone because they learn these patterns from data rather than having them programmed.

Quality comparison by the numbers

On the Mean Opinion Score (MOS) scale, where listeners rate naturalness from 1 (bad) to 5 (excellent), formant synthesis typically scores 2.0-2.5. Concatenative synthesis scores 3.0-3.8 depending on content. Neural synthesis scores 4.0-4.5, approaching the 4.5-4.8 range of professional human narrators. The improvement isn't linear: neural TTS was a step change. In blind tests, listeners frequently cannot distinguish the best neural voices from human speakers for passages under 30 seconds.
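A MOS figure is simply the average of many listener ratings, usually reported with a confidence interval. The sketch below shows the arithmetic. The ratings are made-up values for illustration, not data from any study, and the 1.96 multiplier assumes a normal approximation for a 95% interval.

```python
import math
import statistics

def mean_opinion_score(ratings):
    """Return (MOS, 95% confidence half-width) for ratings on a 1-5 scale."""
    if not ratings:
        raise ValueError("at least one rating is required")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be between 1 and 5")
    mos = statistics.mean(ratings)
    if len(ratings) < 2:
        return mos, 0.0
    # Standard error of the mean, scaled for an approximate 95% interval.
    sem = statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mos, 1.96 * sem

# Made-up ratings from eight hypothetical listeners for one voice sample.
example_ratings = [4, 5, 4, 4, 3, 5, 4, 4]
mos, half_width = mean_opinion_score(example_ratings)
print(f"MOS = {mos:.2f} +/- {half_width:.2f}")
```

With only a handful of listeners the interval is wide, which is why score ranges for different systems can overlap and why comparisons need many ratings per sample.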

Speed and cost trade-offs

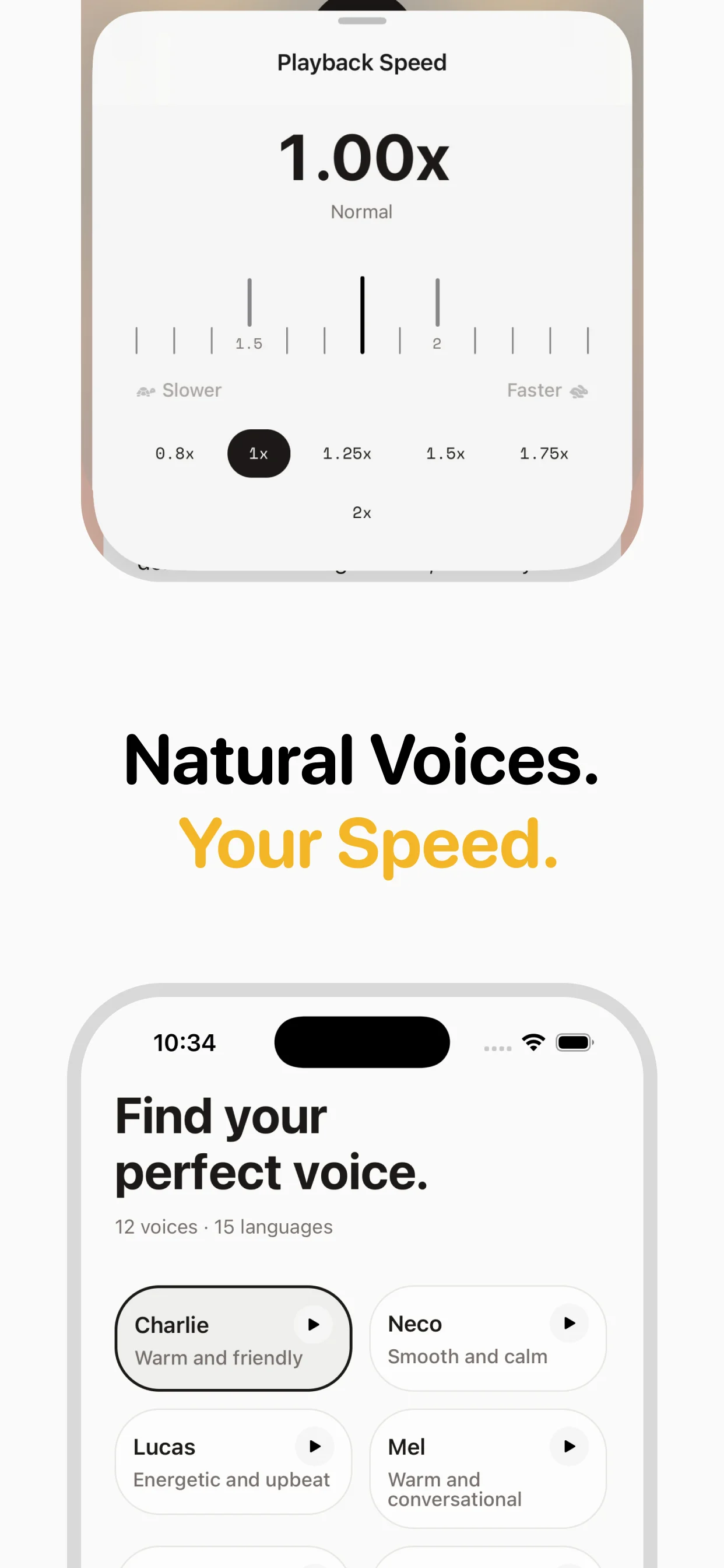

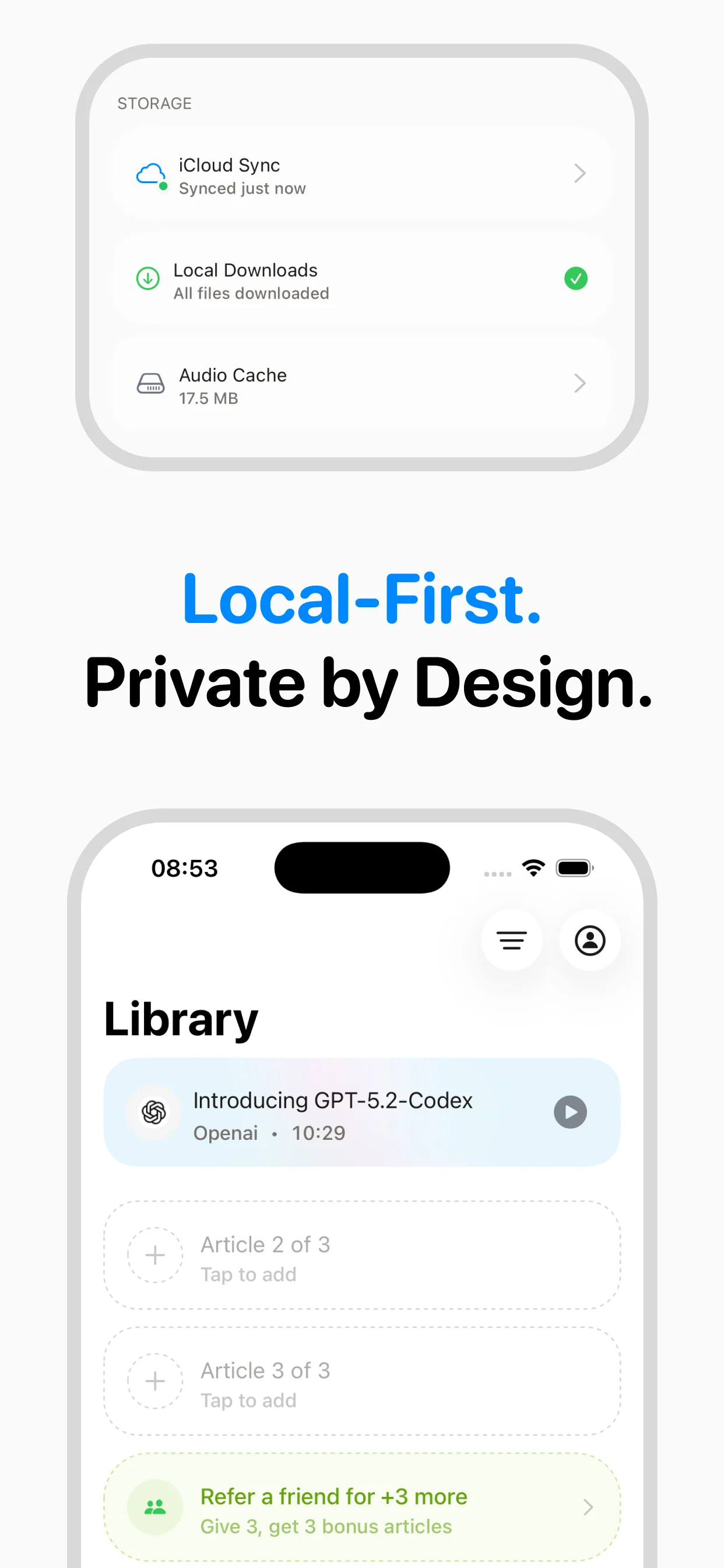

Traditional synthesis is faster and cheaper: formant synthesis runs in real time on a microcontroller. Concatenative synthesis needs more storage but is still fast. Neural synthesis needs significantly more computation and typically runs on GPU hardware. However, inference optimization has made neural TTS practical: modern models generate speech 10-100x faster than real time on a standard GPU. Services like speakeasy handle the infrastructure so you get neural voice quality without managing any hardware.
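"Faster than real time" compares how long the audio lasts with how long it took to generate. A rough sketch of that measurement follows. The `fake_synthesize` function is a placeholder that returns silence, standing in for whatever TTS engine is being timed, so the number it produces says nothing about any real model.

```python
import time

def speedup_over_real_time(synthesis_seconds, audio_seconds):
    """How many seconds of audio are produced per second of compute."""
    if synthesis_seconds <= 0:
        raise ValueError("synthesis time must be positive")
    return audio_seconds / synthesis_seconds

def measure_speedup(synthesize, text, sample_rate):
    """Time one call to `synthesize`, which must return a list of samples."""
    start = time.perf_counter()
    samples = synthesize(text)
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero reading
    return speedup_over_real_time(elapsed, len(samples) / sample_rate)

def fake_synthesize(text):
    """Placeholder engine: 1000 samples of silence per character."""
    return [0.0] * (len(text) * 1000)

# A 10-second clip generated in 0.5 seconds is 20x faster than real time.
print(speedup_over_real_time(0.5, 10.0))
print(measure_speedup(fake_synthesize, "hello world", sample_rate=16000))
```

A value above 1 means the system can keep up with live playback; the margin above 1 is what lets a single GPU serve several listeners at once.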

Frequently asked questions

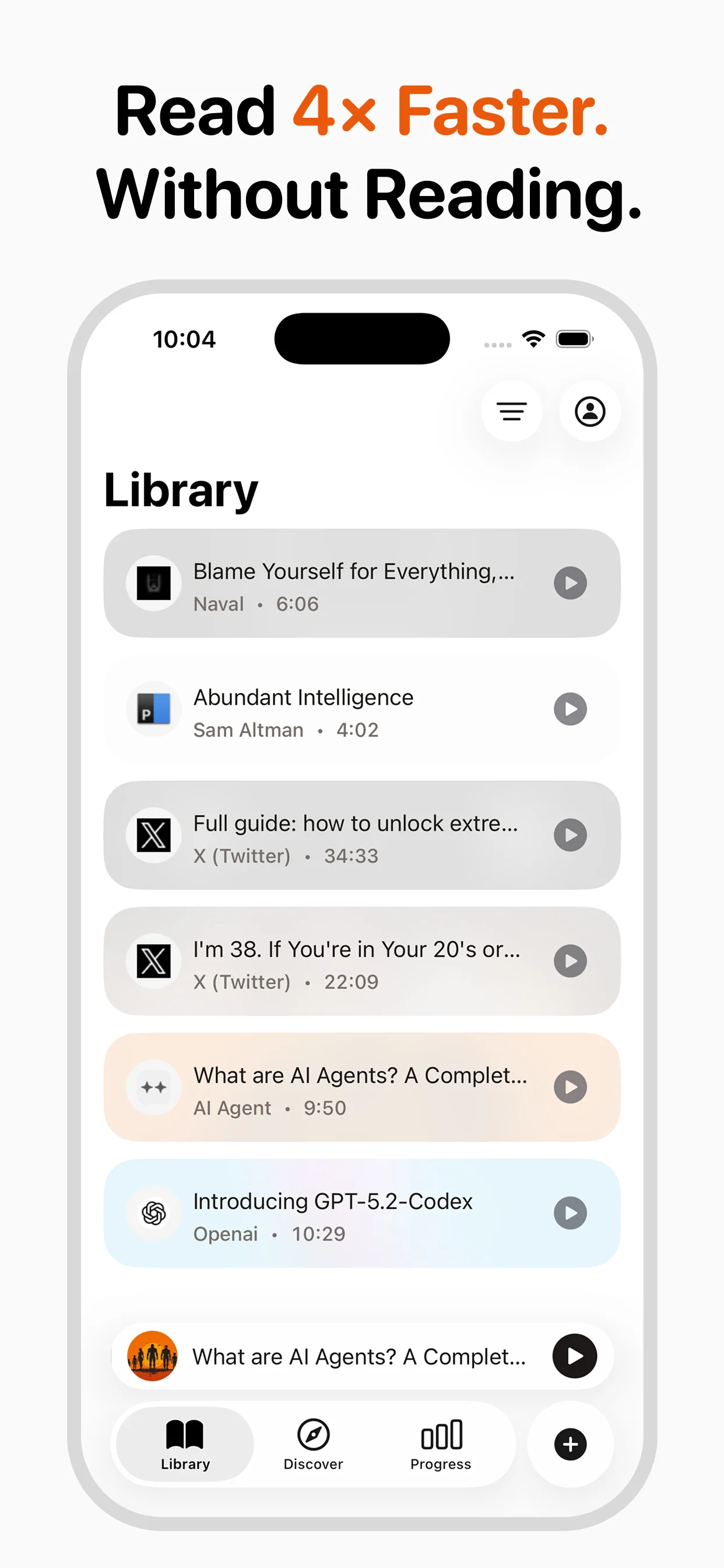

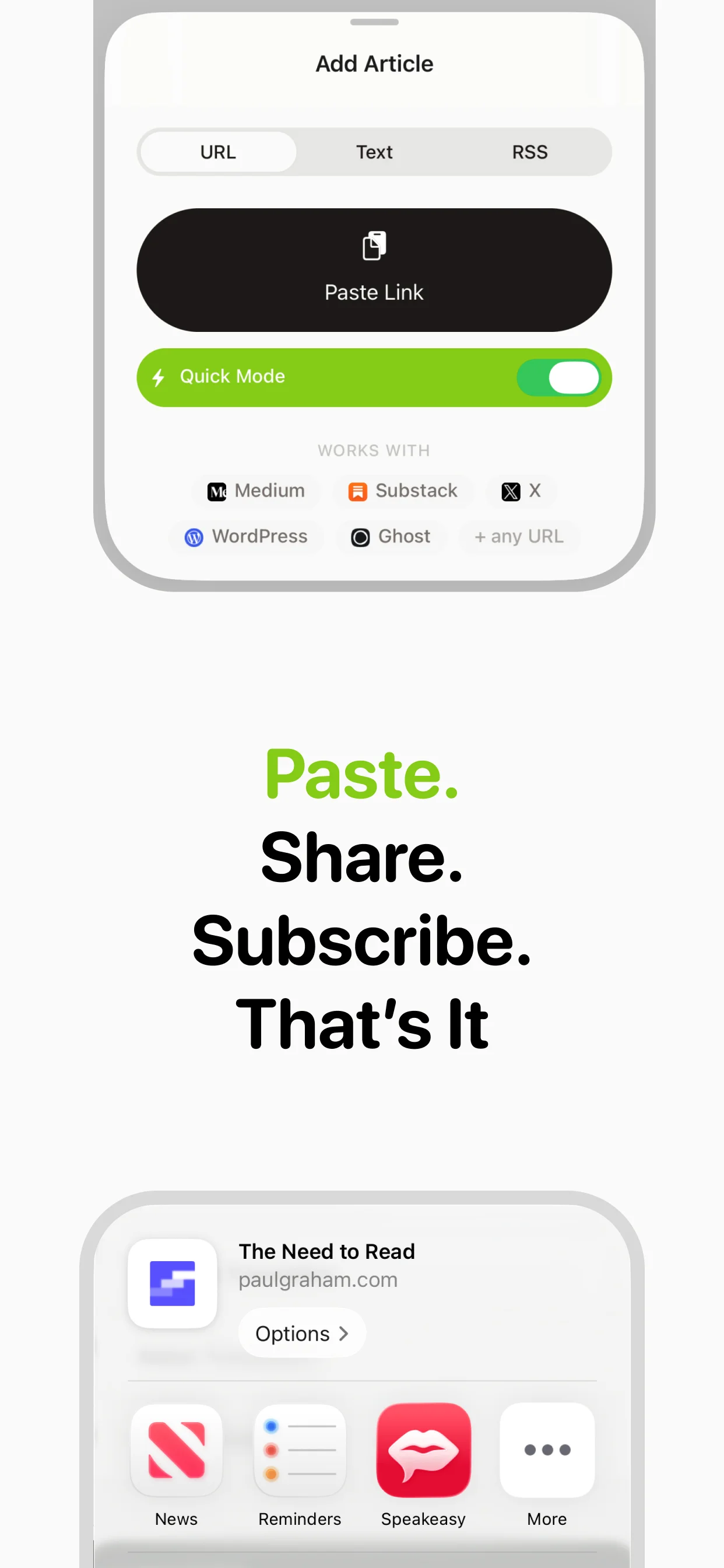

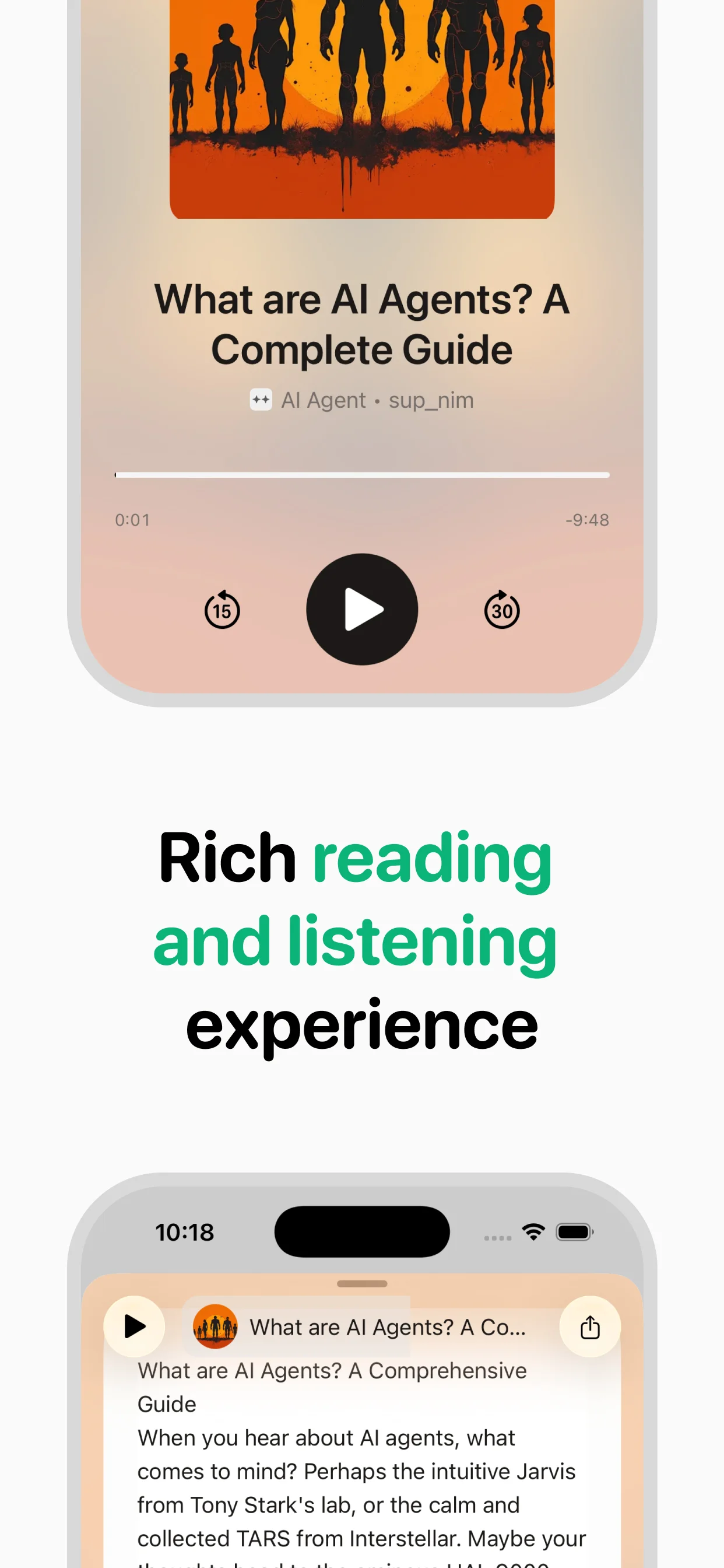

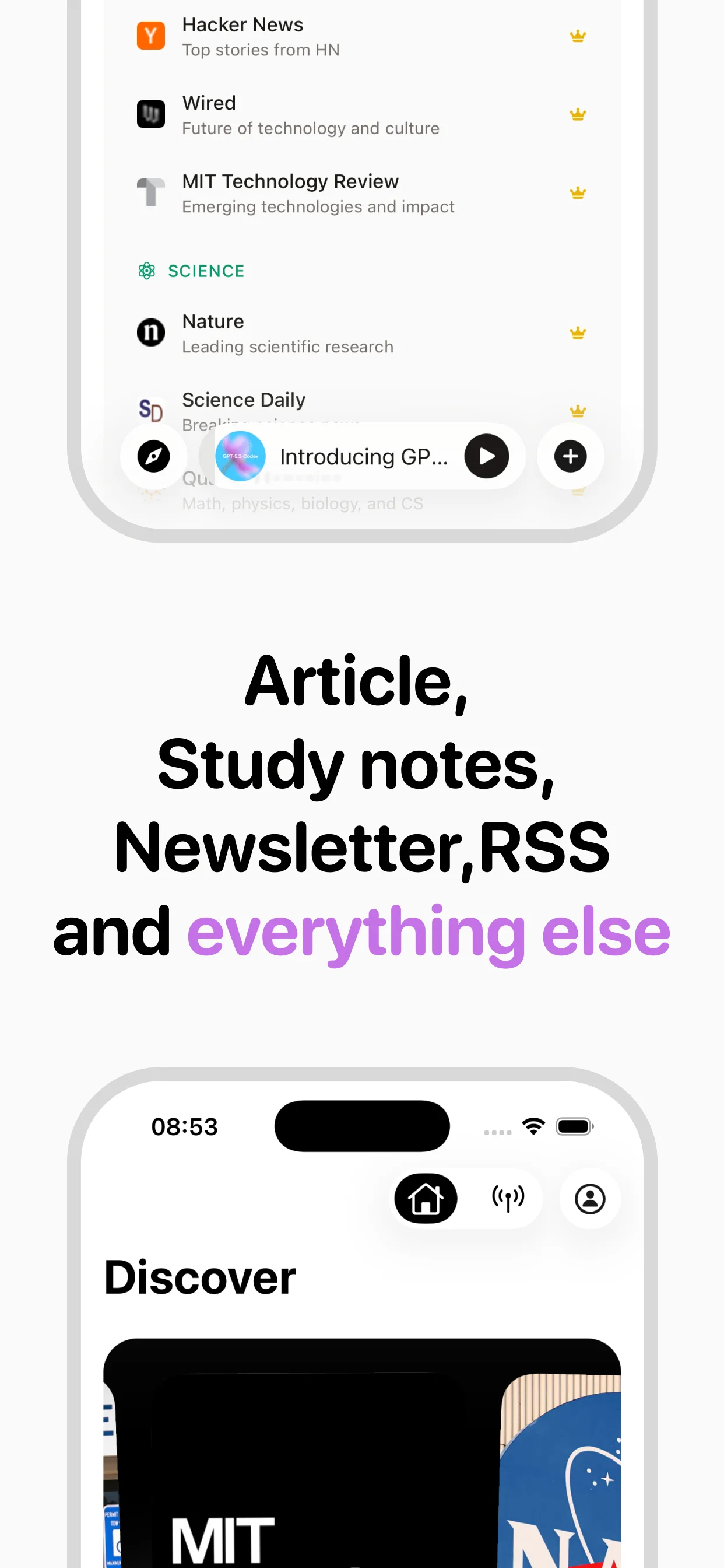
